PDU Remote Management

PDUs (power distribution units) and busways are critical network infrastructure devices that control and optimize how power flows to equipment like servers, routers, firewalls, and switches. They’re difficult to manage remotely, so configuring and updating new devices or fixing problems typically requires tedious, on-site work. This difficulty is magnified in complex, distributed networks with hundreds of individual power devices that must be managed one at a time. What’s needed is a PDU remote management solution that unifies control over distributed devices. It should also streamline infrastructure management with an open architecture that supports third-party power software and automation.

The problem: PDU management is cumbersome for large, distributed networks

PDUs and busways are deployed across remote and distributed locations beyond the central data center, including edge computing sites, automated manufacturing plants, and colocation facilities. They typically aren't network-connected, and they rarely ship with up-to-date firmware, so maintenance requires on-site technicians. Upgrading and managing thousands of PDUs and busways takes hundreds of work hours from on-site IT teams who must manually connect to each unit.

The current solution: PDU remote management with jump boxes or serial consoles

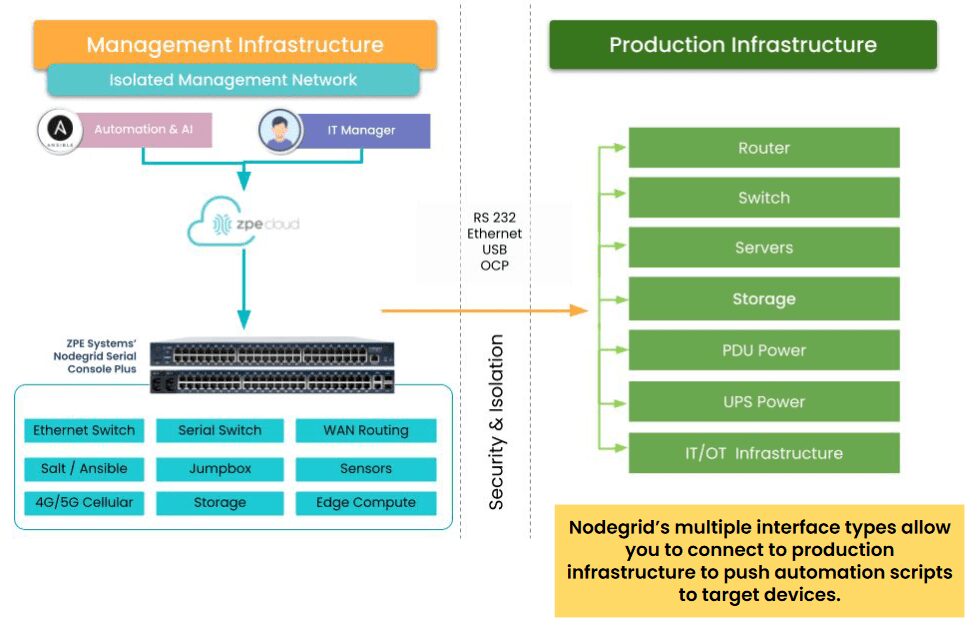

Since most PDUs and busways can't connect to the network, the only way to manage them remotely is to physically connect them with a serial (RS-232) cable to a device that can be accessed remotely, such as an Intel NUC jump box or a serial console.

Unfortunately, jump boxes usually aren't set up to manage more than one serial connection at a time. They solve the remote access problem but provide no centralized management of multiple PDUs or multiple sites. Jump boxes are also often deployed without antivirus or other security software and run unpatched operating systems that may contain vulnerabilities, leaving branch networks exposed.

Serial consoles, on the other hand, can manage multiple serial devices at once and provide remote access. However, they often don't integrate with PDU/busway software and support only a few vendors, which limits their control capabilities and may prevent remote firmware updates. They're also usually single-purpose devices that take up valuable rack space at remote sites with limited room, and they don't interoperate with third-party software for automation, monitoring, and security.

The Hive SR + ZPE Cloud: A next-gen PDU remote management solution

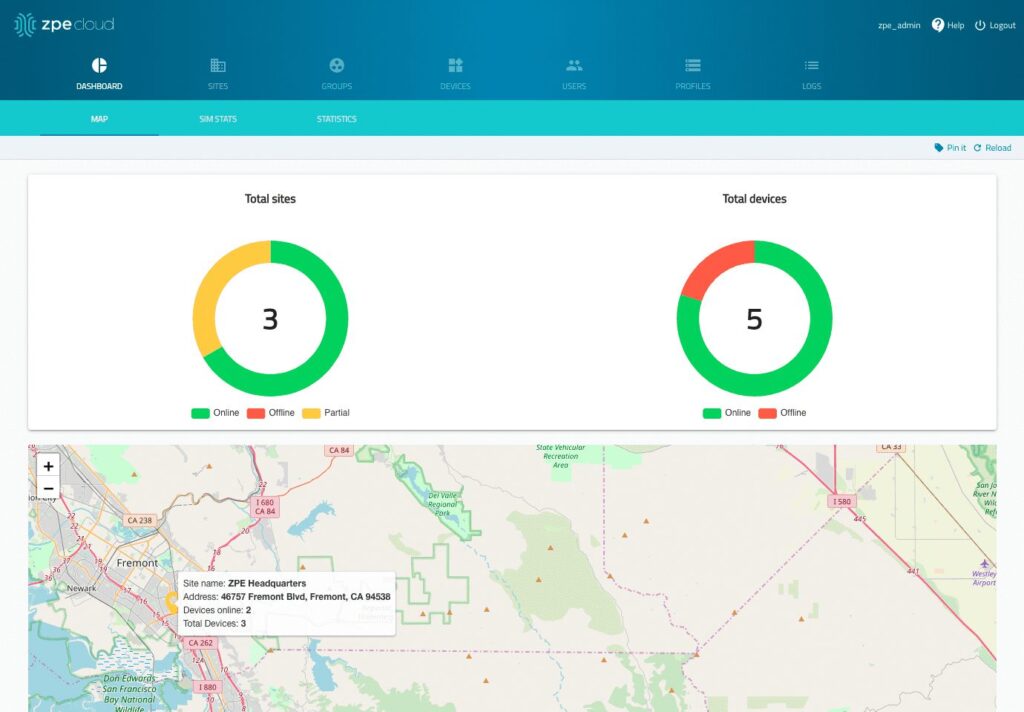

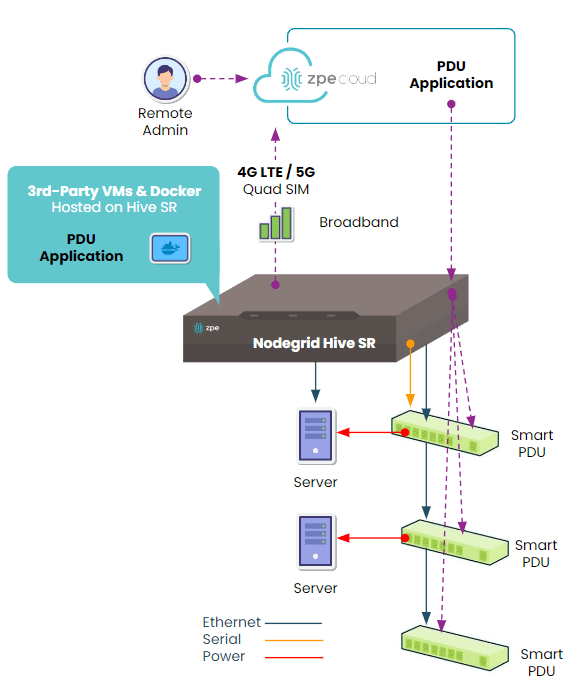

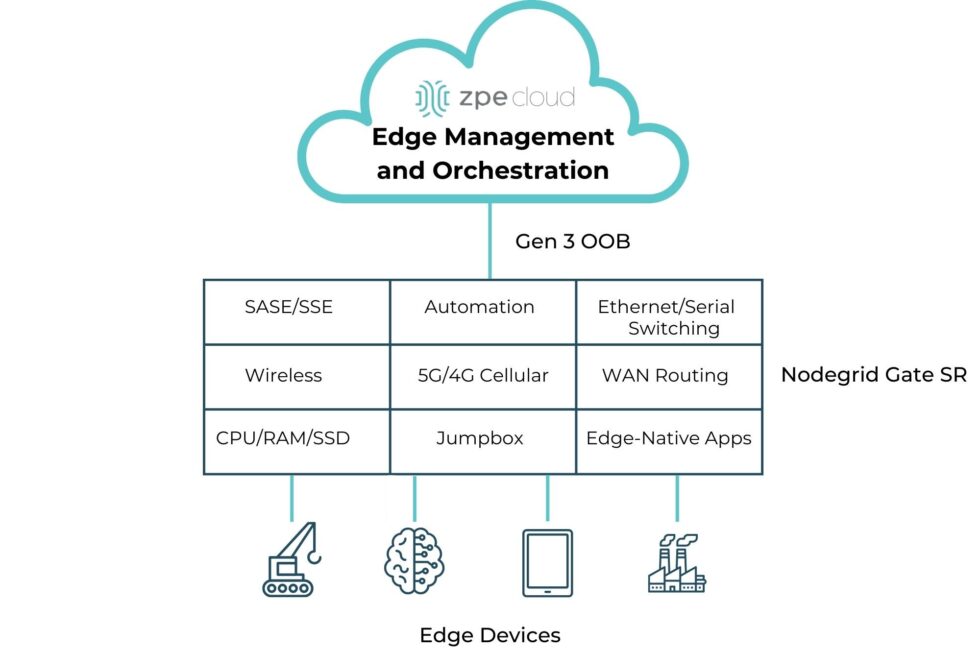

The Hive SR is an integrated branch services router from the Nodegrid family of vendor-neutral infrastructure management solutions offered by ZPE Systems. The Hive automatically discovers power devices and provides secure remote access, eliminating the need to manage PDUs and busways on-site. The ZPE Cloud management platform gives IT teams centralized control over power devices and other infrastructure at all distributed locations, so they can update or roll back firmware, configure and power-cycle equipment, and view monitoring alerts.

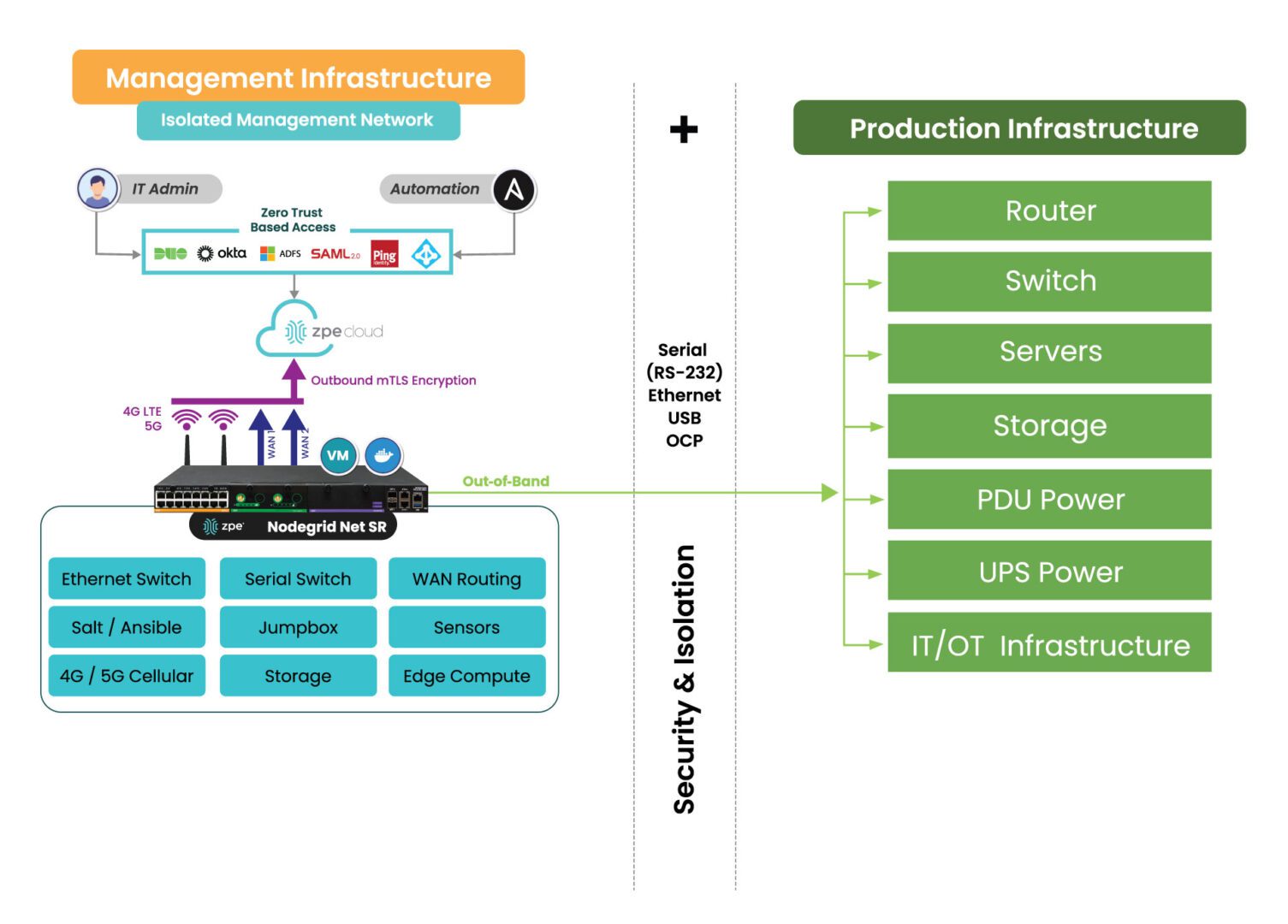

In addition to integrated branch networking capabilities like gateway routing, switching, firewall, Wi-Fi access point, 5G/4G cellular WAN failover, and centralized infrastructure control, the Hive SR and ZPE Cloud also deliver vendor-neutral out-of-band (OOB) management. ZPE's Gen 3 OOB solution creates an isolated management network that doesn't rely on production resources and therefore remains remotely accessible during major outages, ransomware infections, and other adverse events. This gives IT teams a lifeline to perform remote recovery actions, including rolling back PDU firmware updates, power-cycling hung devices, and rebuilding infected systems, without the time and expense of an on-site visit.

The Hive and ZPE Cloud have open architectures that can host or integrate other vendors’ software for PDU/busway management, NetOps automation, zero-trust and SASE security, and more. Administrators get a single, unified, cloud-based platform to orchestrate both automated and manual workflows for PDUs, busways, and any other Nodegrid-connected infrastructure at all distributed business sites. Plus, all ZPE solutions are frequently patched and protected by industry-leading security features to defend your critical branch infrastructure.

Download

Download our Centralized IT Infrastructure Management and Orchestration solution guide to learn how ZPE Cloud can improve your operational efficiency and resilience.